In any urban simulation, vehicles alone are not enough.

Cities feel alive when pedestrians behave naturally.

When movement feels believable.

When interaction follows logical rules.

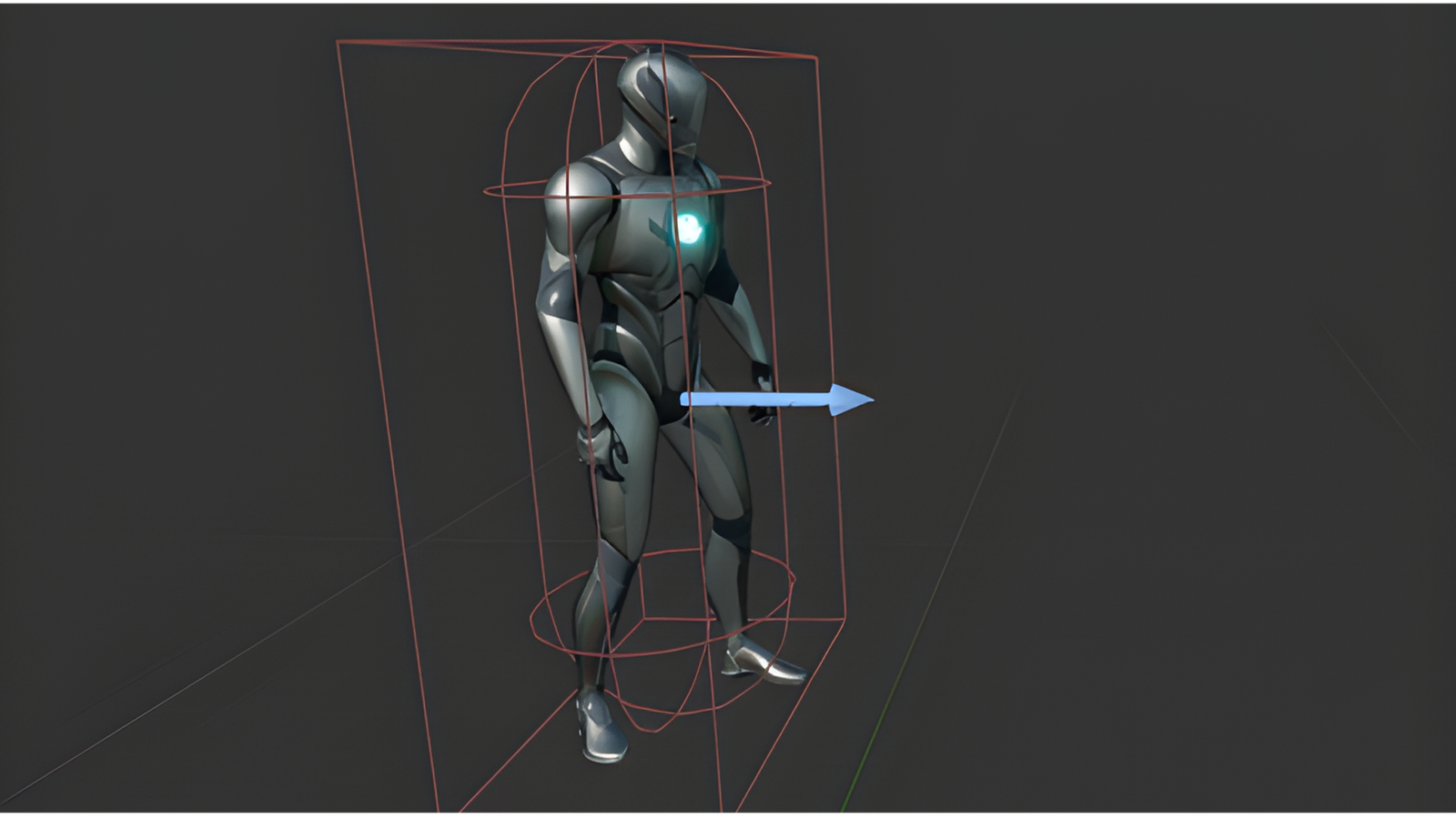

As part of our development pipeline, we built a modular AI pedestrian system inside Unreal Engine 5 designed to be flexible, scalable, and behavior-driven.

The objective wasn’t just movement.

It was systemic integration.

Design Philosophy

Rather than creating a single hard-coded character behavior, we structured the pedestrian as a modular AI framework.

This ensures adaptability across different characters and scenarios without rewriting logic.

The system is:

- Compatible with any character rig that follows the UE5 mannequin skeleton

- Built for reusability across multiple simulation contexts

- Designed to integrate with environmental and traffic systems

Core Features

The AI pedestrian currently supports:

- Modular Character Integration: works with any character built on the Unreal Engine 5 mannequin skeleton.

- Point-to-Point Navigation: walks toward predefined targets or scripted destinations.

- Free Navigation on NavMesh Bounds: roams dynamically within defined navigation mesh areas.

- Rule-Based Interaction: reacts to environmental events, including collision logic such as being hit by vehicles.

All movement and interaction logic is driven through Unreal’s AI and navigation systems, ensuring reliable and scalable behavior.
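The sketch below is a minimal, engine-agnostic C++ example. It shows one way point-to-point navigation, free roaming and a rule-based vehicle-hit reaction could sit together in a single state machine. It is an illustration under stated assumptions, not the project's implementation:

- The `Pedestrian` class, the `PedestrianState` values and the rule "start roaming after the last waypoint" are all hypothetical.
- Picking a random point inside an axis-aligned `Bounds` box is only a stand-in for a real NavMesh query. In UE5 that role would typically fall to the navigation system, for example `UNavigationSystemV1::GetRandomReachablePointInRadius`.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <utility>
#include <vector>

// Hypothetical 2D point; in UE5 this would be an FVector.
struct Point { float x; float y; };

// Hypothetical axis-aligned box standing in for a NavMesh bounds area.
struct Bounds { Point min; Point max; };

// Hypothetical behaviour states, for illustration only.
enum class PedestrianState { PointToPoint, Roaming, HitByVehicle };

class Pedestrian {
public:
    Pedestrian(std::vector<Point> waypoints, Bounds roamArea, unsigned seed)
        : waypoints_(std::move(waypoints)), roamArea_(roamArea), rng_(seed) {
        // uniform_real_distribution requires min <= max on each axis.
        assert(roamArea_.min.x <= roamArea_.max.x && roamArea_.min.y <= roamArea_.max.y);
    }

    PedestrianState state() const { return state_; }

    // Returns the next movement target according to the current state.
    Point nextTarget() {
        // Rule: a pedestrian that was hit stops taking new targets
        // and keeps reporting its last one.
        if (state_ == PedestrianState::HitByVehicle) return lastTarget_;

        // Point-to-point: walk the predefined waypoints in order.
        if (state_ == PedestrianState::PointToPoint) {
            if (index_ < waypoints_.size()) {
                lastTarget_ = waypoints_[index_++];
                return lastTarget_;
            }
            // Rule (assumed): once every waypoint is used, switch to free roaming.
            state_ = PedestrianState::Roaming;
        }

        // Roaming: random point inside the bounds (stand-in for a NavMesh query).
        std::uniform_real_distribution<float> dx(roamArea_.min.x, roamArea_.max.x);
        std::uniform_real_distribution<float> dy(roamArea_.min.y, roamArea_.max.y);
        lastTarget_ = Point{dx(rng_), dy(rng_)};
        return lastTarget_;
    }

    // Environmental event: a vehicle collision overrides any movement state.
    void onVehicleHit() { state_ = PedestrianState::HitByVehicle; }

private:
    std::vector<Point> waypoints_;
    Bounds roamArea_;
    std::mt19937 rng_;
    std::size_t index_ = 0;
    PedestrianState state_ = PedestrianState::PointToPoint;
    Point lastTarget_{0.f, 0.f};
};
```

The vehicle hit is modelled as its own state that overrides both movement modes. As a result, further reactions could be added as new states without touching the navigation logic.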

Simulation Impact

Pedestrians are not visual fillers.

They are behavioral components of the environment.

By integrating rule-based interaction and NavMesh-driven movement:

- The environment feels reactive

- Traffic systems gain contextual realism

- Scenarios become more dynamic

This transforms pedestrians from animated assets into active participants within the simulation ecosystem.

Beyond Basic Movement

The system is structured to allow future expansion, including:

- Behavior trees for advanced decision-making

- Context-aware reactions

- State-based animation blending

- Crowd system scalability

Because in real-time urban simulations, realism comes from systems, not isolated features.